More Efficient/Faster Average Color of Image

April 2, 2021

Skip to the ‘Juicy Code 🧃’ section if you just want the code and don’t care about the preamble of why you might want this!

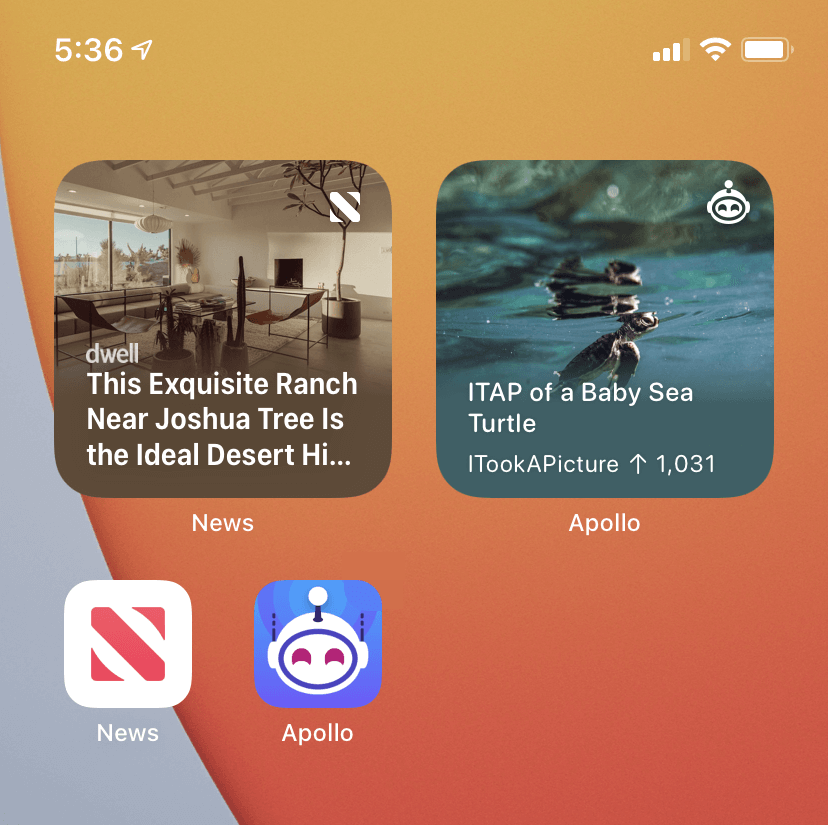

Finding the average color of an image is a nice trick to have in your toolbelt for spicing up views. On iOS, for instance, Apple uses it for their pretty homescreen widgets: the average color of the image goes behind the text so the text is more readable. Here are Apple’s News widget and my Apollo widget:

Core Image Approach Pitfalls

There are lots of articles out there on how to do this on iOS, but all of the code I’ve encountered accomplishes it with Core Image. Something like the following makes it really easy:

    import UIKit

    // Wrapped in a UIImage extension, since the function reads `self.size` and draws `self`
    extension UIImage {
        func coreImageAverageColor() -> UIColor? {
            // Shrink down a bit first
            let aspectRatio = self.size.width / self.size.height
            let resizeSize = CGSize(width: 40.0, height: 40.0 / aspectRatio)
            let renderer = UIGraphicsImageRenderer(size: resizeSize)
            let baseImage = self

            let resizedImage = renderer.image { (context) in
                baseImage.draw(in: CGRect(origin: .zero, size: resizeSize))
            }

            // Core Image land!
            guard let inputImage = CIImage(image: resizedImage) else { return nil }

            // The filter averages over this region; here it is the whole image
            let extentVector = CIVector(x: inputImage.extent.origin.x, y: inputImage.extent.origin.y, z: inputImage.extent.size.width, w: inputImage.extent.size.height)

            guard let filter = CIFilter(name: "CIAreaAverage", parameters: [kCIInputImageKey: inputImage, kCIInputExtentKey: extentVector]) else { return nil }
            guard let outputImage = filter.outputImage else { return nil }

            // The output is a single pixel, so 4 bytes (R, G, B, A) are enough
            var bitmap = [UInt8](repeating: 0, count: 4)
            let context = CIContext(options: [.workingColorSpace: kCFNull as Any])
            context.render(outputImage, toBitmap: &bitmap, rowBytes: 4, bounds: CGRect(x: 0, y: 0, width: 1, height: 1), format: .RGBA8, colorSpace: nil)

            return UIColor(red: CGFloat(bitmap[0]) / 255, green: CGFloat(bitmap[1]) / 255, blue: CGFloat(bitmap[2]) / 255, alpha: CGFloat(bitmap[3]) / 255)
        }
    }

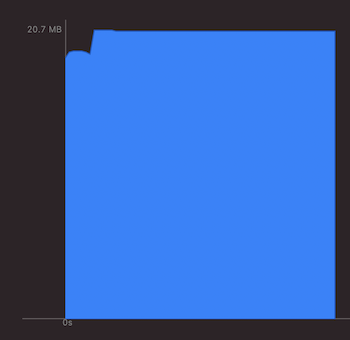

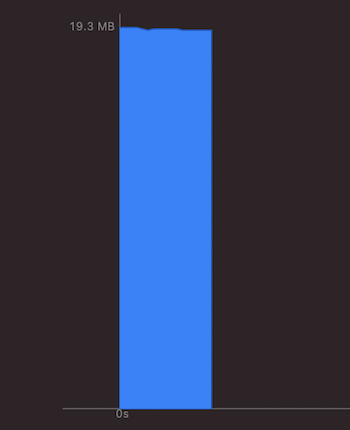

Core Image is a great framework capable of some insanely powerful things, but in my experience it isn’t optimal for something as simple as finding the average color of an image: it takes up quite a bit more memory and time, and you don’t have a lot of either when creating widgets. That, or I don’t know enough about Core Image (it’s a substantial framework!) to figure out how to optimize the above code, which is entirely possible. But hey, the other solution is easier to understand, I think.

You have around 30 MB of headroom with widgets, and from my tests the normal Core Image filter way was taking about 5 MB of memory just for the calculation. That’s about 17% of the total memory you get for the entire widget for a single operation, which could really hurt you if you’re up close to the limit. And you don’t want to break that 30 MB limit if you can avoid it: from what I can see, iOS (understandably) penalizes you for it, and repeated offenses mean your widget doesn’t get updated as often.

I’m no Core Image expert, but I’m guessing that since it’s this super powerful GPU-based framework, the memory consumption is inconsequential when you’re doing crazy realtime image filters or something. But who knows, I’m just going off measurements.

You can see very clearly in Xcode’s memory debugger when Core Image kicks in, causing a little spike. Almost more concerning is that it doesn’t seem to normalize back down any time soon.

(That might not be the most egregious example. It can be worse.)

Just Iterating Over Pixels Approach

An easy approach would be to just iterate over every pixel in the image, add up all their colors, then average them. The downside is there could be a lot of pixels (think of a 4K image), but thankfully we can just resize the image down a bunch first (which is fast): the “gist” of the color information is preserved and we have far fewer pixels to deal with.
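To make the idea concrete, here is a minimal sketch of the "add everything up, then divide" step on its own, using a plain array of pixels instead of a real image. The `RGB` type and the `averageColor(of:)` function are hypothetical names for illustration only, not part of UIKit or of the final code in this post.

```swift
// A plain 8-bit RGB pixel. Hypothetical type, just for this sketch.
struct RGB: Equatable {
    var r: UInt8
    var g: UInt8
    var b: UInt8
}

/// Sums each channel across all pixels, then divides by the pixel count.
/// Returns nil for an empty input so we never divide by zero.
func averageColor(of pixels: [RGB]) -> RGB? {
    guard !pixels.isEmpty else { return nil }

    // Int accumulators: a UInt8 total would overflow after a couple of pixels.
    var totalR = 0, totalG = 0, totalB = 0

    for p in pixels {
        totalR += Int(p.r)
        totalG += Int(p.g)
        totalB += Int(p.b)
    }

    // Integer division truncates, which is fine for a rough average.
    let n = pixels.count
    return RGB(r: UInt8(totalR / n), g: UInt8(totalG / n), b: UInt8(totalB / n))
}

// One pure red pixel and one pure blue pixel average out to a purple.
let samplePixels = [RGB(r: 255, g: 0, b: 0), RGB(r: 0, g: 0, b: 255)]
let sampleAverage = averageColor(of: samplePixels)
```

At 40x40 there are only 1,600 pixels to visit, so a loop like this stays cheap no matter how large the original image was.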

One other catch is that just ‘iterating over the pixels’ isn’t as easy as it sounds when the image you’re dealing with could be in a variety of different formats (CMYK, RGBA, ARGB, BBQ, etc.). I came across a great answer on StackOverflow that linked to an Apple Technical Q&A that recommended just drawing out the image anew in a standard format you can always trust, so that solves that.

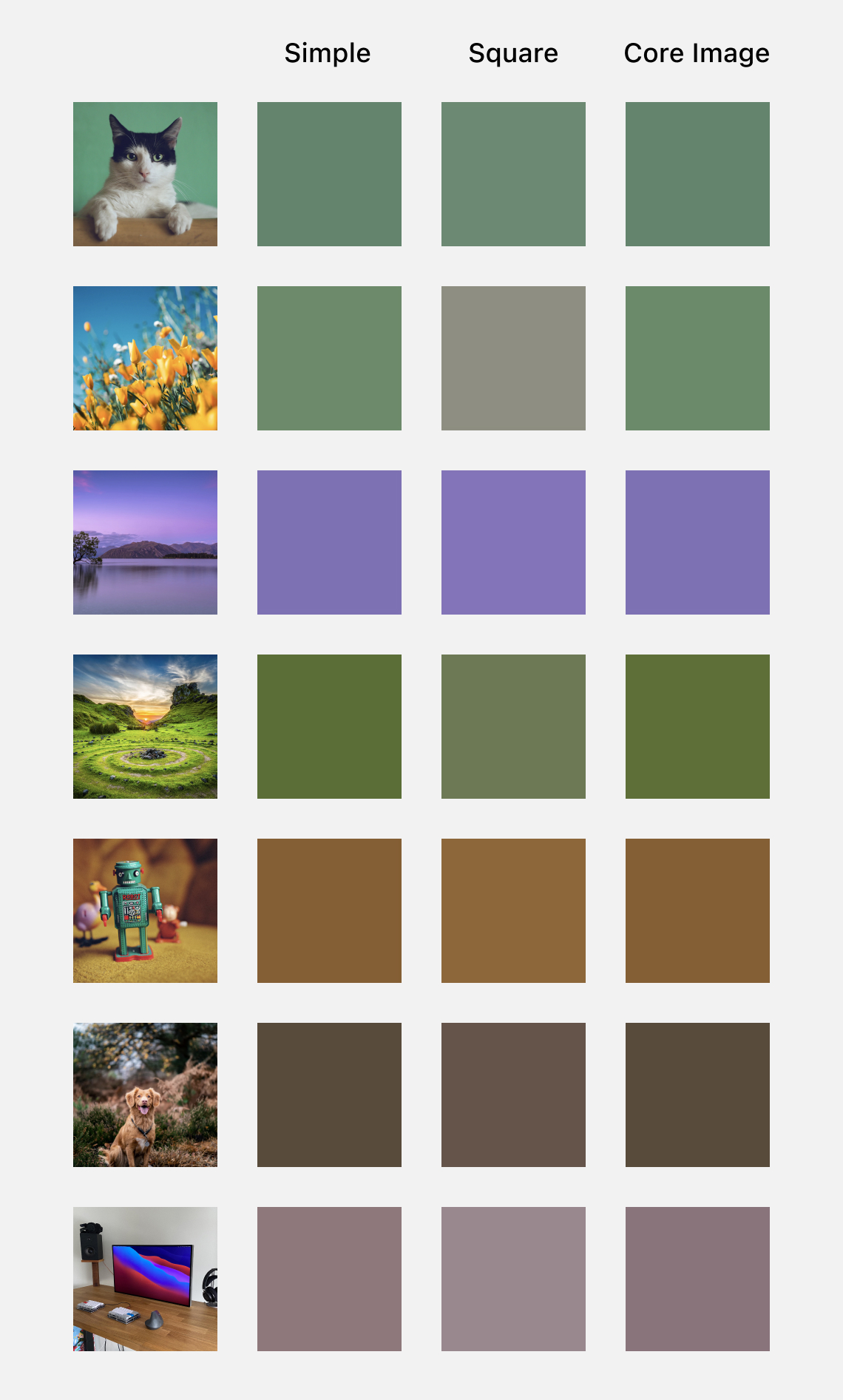

Lastly, there’s some debate over which algorithm is best for averaging out all the colors in an image. Here’s a very interesting blog post that talks about how a sum of squares approach could be considered better. Through a bunch of tests, I see how it could be when approximating a bunch of color blocks of a larger image, but the ‘simpler’ way of just summing seems to have better color results, and more closely mimics Core Image’s results. The code below includes both options, and I’ll include a comparison table so you can choose for yourself.
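As a rough illustration of how the two approaches differ, here is a small sketch that averages a single color channel both ways. The function names `simpleMean` and `rootMeanSquare` are made up for this example, and both assume a non-empty input.

```swift
/// Plain arithmetic mean of one channel's values (the 'simple' approach).
/// Assumes `values` is non-empty.
func simpleMean(_ values: [Double]) -> Double {
    return values.reduce(0, +) / Double(values.count)
}

/// Squares each value, averages the squares, then takes the square root
/// (the 'sum of squares' approach). Assumes `values` is non-empty.
func rootMeanSquare(_ values: [Double]) -> Double {
    let sumOfSquares = values.reduce(0) { $0 + $1 * $1 }
    return (sumOfSquares / Double(values.count)).squareRoot()
}

// One channel of an image that is half black (0) and half white (255).
let channel: [Double] = [0, 255]

let simpleResult = simpleMean(channel)       // 127.5
let squaredResult = rootMeanSquare(channel)  // roughly 180.3
```

Squaring gives bright values more weight, so the sum of squares result always comes out at least as bright as the simple one. That matches the general tendency you would expect to see in a side-by-side comparison.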

The Juicy Code 🧃

Here’s the code I landed on; feel free to change it as you see fit. I like to keep in lots of comments so that if I come back to it later I can understand what’s going on, especially when it’s dealing with bitmasking and color profile bit structures and whatnot, which I don’t use often in my day-to-day and which require a bit of a rejogging of the Computer Sciencey part of my brain. It’s really pretty simple once you read it over.

    import UIKit

    extension UIImage {
        /// There are two main ways to get the color from an image: a simple "sum up and average", or summing the
        /// squares of the values and taking the square root of their average. Each has its advantages, but the
        /// 'simple' option *seems* better for the average color of an entire image and closely mirrors Core Image.
        /// Details: https://sighack.com/post/averaging-rgb-colors-the-right-way
        enum AverageColorAlgorithm {
            case simple
            case squareRoot
        }

        func findAverageColor(algorithm: AverageColorAlgorithm = .simple) -> UIColor? {
            guard let cgImage = cgImage else { return nil }

            // First, resize the image. We do this for two reasons: 1) fewer pixels to deal with means faster
            // calculation, and a resized image still has the "gist" of the colors, and 2) the image we're dealing
            // with may come in any of a variety of color formats (CMYK, ARGB, RGBA, etc.) which complicates things,
            // and redrawing it normalizes that into a base color format we can deal with.
            // 40x40 is a good size to resize to: it still preserves quite a bit of detail but doesn't have too many
            // pixels to deal with. Aspect ratio is irrelevant for just finding average color.
            let size = CGSize(width: 40, height: 40)

            let width = Int(size.width)
            let height = Int(size.height)
            let totalPixels = width * height

            let colorSpace = CGColorSpaceCreateDeviceRGB()

            // ARGB format
            let bitmapInfo: UInt32 = CGBitmapInfo.byteOrder32Little.rawValue | CGImageAlphaInfo.premultipliedFirst.rawValue

            // 8 bits for each color channel, we're doing ARGB so 32 bits (4 bytes) total, and thus if the image is
            // n pixels wide, and has 4 bytes per pixel, the total bytes per row is 4n. That gives us 2^8 = 256 color
            // variations for each RGB channel or 256 * 256 * 256 = ~16.7M color options in total. That seems like a
            // lot, but lots of HDR movies are in 10 bit, which is (2^10)^3 = 1 billion color options!
            guard let context = CGContext(data: nil, width: width, height: height, bitsPerComponent: 8, bytesPerRow: width * 4, space: colorSpace, bitmapInfo: bitmapInfo) else { return nil }

            // Draw our resized image
            context.draw(cgImage, in: CGRect(origin: .zero, size: size))

            guard let pixelBuffer = context.data else { return nil }

            // Bind the pixel buffer's memory location to a pointer we can use/access
            let pointer = pixelBuffer.bindMemory(to: UInt32.self, capacity: width * height)

            // Keep track of total colors (note: we don't care about alpha and will always assume alpha of 1, AKA opaque)
            var totalRed = 0
            var totalBlue = 0
            var totalGreen = 0

            // Column of pixels in image
            for x in 0 ..< width {
                // Row of pixels in image
                for y in 0 ..< height {
                    // To get the pixel location just think of the image as a grid of pixels, but stored as one long
                    // row rather than columns and rows, so for instance to map the pixel from the grid in the 15th
                    // row and 3 columns in to our "long row", we'd offset ourselves 15 times the width in pixels of
                    // the image, and then offset by the amount of columns
                    let pixel = pointer[(y * width) + x]

                    let r = red(for: pixel)
                    let g = green(for: pixel)
                    let b = blue(for: pixel)

                    switch algorithm {
                    case .simple:
                        totalRed += Int(r)
                        totalBlue += Int(b)
                        totalGreen += Int(g)
                    case .squareRoot:
                        // Square in Int space; the largest possible total (1,600 * 255 * 255) fits easily
                        totalRed += Int(r) * Int(r)
                        totalGreen += Int(g) * Int(g)
                        totalBlue += Int(b) * Int(b)
                    }
                }
            }

            let averageRed: CGFloat
            let averageGreen: CGFloat
            let averageBlue: CGFloat

            switch algorithm {
            case .simple:
                averageRed = CGFloat(totalRed) / CGFloat(totalPixels)
                averageGreen = CGFloat(totalGreen) / CGFloat(totalPixels)
                averageBlue = CGFloat(totalBlue) / CGFloat(totalPixels)
            case .squareRoot:
                averageRed = sqrt(CGFloat(totalRed) / CGFloat(totalPixels))
                averageGreen = sqrt(CGFloat(totalGreen) / CGFloat(totalPixels))
                averageBlue = sqrt(CGFloat(totalBlue) / CGFloat(totalPixels))
            }

            // Convert from [0 ... 255] format to the [0 ... 1.0] format UIColor wants
            return UIColor(red: averageRed / 255.0, green: averageGreen / 255.0, blue: averageBlue / 255.0, alpha: 1.0)
        }

        private func red(for pixelData: UInt32) -> UInt8 {
            // For a quick primer on bit shifting and what we're doing here, in our ARGB color format image each
            // pixel's colors are stored as a 32 bit integer, with 8 bits per color channel (A, R, G, and B).
            //
            // So a pure red color would look like this in bits in our format, all red, no green, no blue, and
            // 'who cares' alpha:
            //
            // 11111111 11111111 00000000 00000000
            // ^alpha   ^red     ^green   ^blue
            //
            // We want to grab only the red channel in this case, we don't care about alpha, green, or blue. So we
            // want to shift the red bits all the way to the right in order to have them in the right position
            // (we're storing colors as 8 bits, so we need the right most 8 bits to be the red). Red is 16 bits
            // from the right, so we shift it by 16 (for the other colors, we shift less, as shown below).
            //
            // Just shifting would give us:
            //
            // 00000000 00000000 11111111 11111111
            //                   ^alpha   ^red
            //
            // The alpha got pulled over which we don't want or care about, so we need to get rid of it. We can do
            // that with the bitwise AND operator (&) which compares bits and only keeps a 1 if both bits being
            // compared are 1s. So we're basically using it as a gate to only let the bits we want through. 255
            // (below) is the value we're using as in binary it's 11111111 (or in 32 bit, it's
            // 00000000 00000000 00000000 11111111) and the result of the bitwise operation is then:
            //
            // 00000000 00000000 11111111 11111111
            // 00000000 00000000 00000000 11111111
            // -----------------------------------
            // 00000000 00000000 00000000 11111111
            //
            // So as you can see, it only keeps the last 8 bits and 0s out the rest, which is what we want! Woohoo!
            // (It isn't too exciting in this scenario, but if it wasn't pure red and was instead a red of value
            // "11010010" for instance, it would also mirror that down)
            return UInt8((pixelData >> 16) & 255)
        }

        private func green(for pixelData: UInt32) -> UInt8 {
            // Green sits 8 bits from the right
            return UInt8((pixelData >> 8) & 255)
        }

        private func blue(for pixelData: UInt32) -> UInt8 {
            // Blue is already in the right-most 8 bits, so no shift is needed
            return UInt8((pixelData >> 0) & 255)
        }
    }
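If you want to poke at the indexing and bit shifting without an actual image, here is a tiny standalone sketch that runs as a plain Swift script. It assumes the same packed ARGB layout as the code above (alpha in the top 8 bits, then red, green, blue). The `packARGB` helper and the 3x2 "image" are made up for the example.

```swift
// Packs four 8-bit channels into one 32-bit ARGB value (hypothetical helper).
func packARGB(a: UInt8, r: UInt8, g: UInt8, b: UInt8) -> UInt32 {
    return (UInt32(a) << 24) | (UInt32(r) << 16) | (UInt32(g) << 8) | UInt32(b)
}

// A 3x2 image stored as one long row: all of row 0 first, then all of row 1.
let gridWidth = 3
let gridHeight = 2
let grid: [UInt32] = [
    packARGB(a: 255, r: 10, g: 20, b: 30),
    packARGB(a: 255, r: 40, g: 50, b: 60),
    packARGB(a: 255, r: 70, g: 80, b: 90),
    packARGB(a: 255, r: 100, g: 110, b: 120),
    packARGB(a: 255, r: 130, g: 140, b: 150),
    packARGB(a: 255, r: 210, g: 64, b: 128),
]

// Pixel in row 1, column 2: skip one full row, then move two columns in.
let column = 2
let row = 1
let packed = grid[(row * gridWidth) + column]

// Shift the channel we want to the far right, then mask off everything else.
let redValue = UInt8((packed >> 16) & 255)
let greenValue = UInt8((packed >> 8) & 255)
let blueValue = UInt8(packed & 255)
```

Here the red of 210 is the "11010010" value mentioned in the comments, and it survives the shift and mask unchanged.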

The Results

As you can see, we don’t see any memory spike whatsoever from the call. Yay! If anything, it kinda dips a bit. Did we find the secret to infinite memory?

In terms of speed, it’s also about 4x faster. The Core Image approach takes about 0.41 seconds on a variety of test images, whereas the ‘Just Iterating Over Pixels’ approach (I need a catchier name) only takes 0.09 seconds.

These tests were done on an iPhone 6s, which I like as a test device because it’s the oldest iPhone that still supports iOS 13/14.

Comparison of Colors

Lastly, here’s a quick comparison chart showing the differences between the ‘simple’ summing algorithm, the ‘sum of squares’ algorithm, and the Core Image filter. As you can see, especially for the second flowery image, the ‘simple/sum’ approach seems to have the most desirable results and closely mirrors Core Image.

Okay, that’s all I got! Have fun with colors!